Google Cloud Unveils Eighth-Generation TPU Chips and $750 Million Startup Fund to Challenge Nvidia

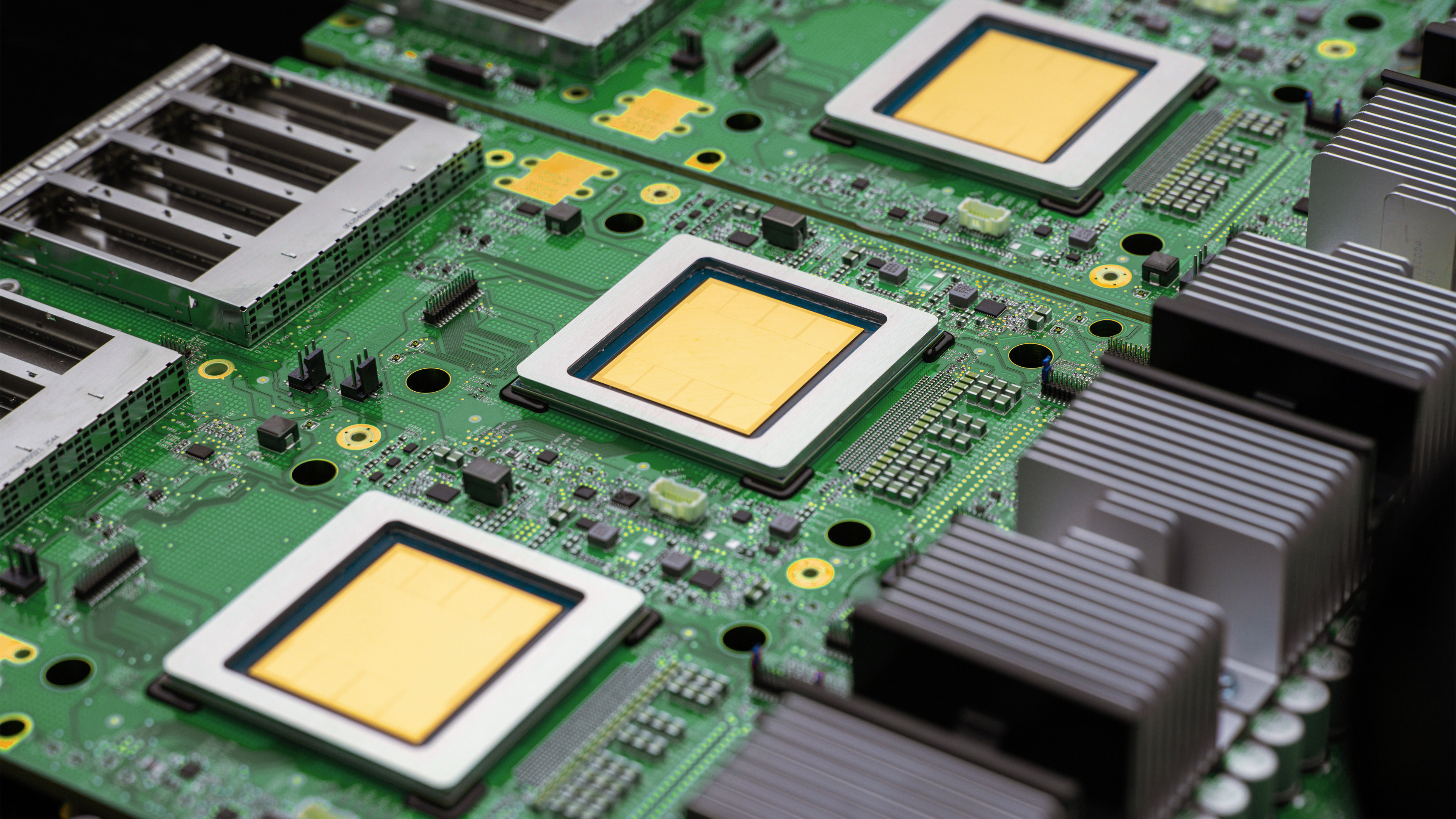

Google Cloud announced its most ambitious hardware push in years at its annual Next conference, unveiling two eighth-generation Tensor Processing Units that handle training and inference on separate chips. Those two workloads are the dominant cost centers in building and running large machine learning models. Alongside the chips, Google committed $750 million to a new fund supporting startups that build on its cloud infrastructure, a direct bid to pull developers away from Nvidia's ecosystem and Amazon Web Services.

Background

Nvidia has dominated the market for high-performance computing chips since the deep learning revolution took hold in the early 2010s. Its H100 and H200 GPUs became the de facto standard for training large language models, and the company's market capitalization surged past $3 trillion in 2024 as demand for its hardware outstripped supply. Google, whose researchers introduced the Transformer architecture that underpins most modern large language models, has long sought to reduce its dependence on Nvidia by developing its own custom silicon through the Tensor Processing Unit program.

Previous generations of TPUs were primarily used internally by Google for its own products. The eighth generation represents a more aggressive commercial push, with Google positioning the chips as a cost-effective alternative for the broader market — particularly for inference workloads, which now account for the majority of compute spending as companies move from training models to deploying them at scale.

Key Developments

The TPU 8t is designed for large-scale model training, offering what Google describes as a 3x improvement in training throughput over the previous generation. The TPU 8i is optimized for inference, with a focus on latency reduction and energy efficiency — critical factors as companies run billions of queries per day through deployed models. Google claims the TPU 8i delivers inference at roughly half the cost per query of comparable Nvidia hardware, though independent benchmarks have not yet confirmed that figure.

The $750 million startup fund provides cloud credits, engineering support, and distribution opportunities through Google's enterprise sales channels. The fund targets companies building what Google calls "agentic" applications: software that can autonomously complete multi-step tasks on behalf of users. Google also announced a partnership with Qualcomm to develop on-device processing chips for smartphones; Qualcomm shares rose 1% on the news.

Nvidia shares rose 4% to an all-time high on April 27, suggesting that investors see the market as large enough to support multiple winners rather than as a zero-sum contest between chip providers.

Why Americans Should Care

The competition between Google and Nvidia for dominance in high-performance computing has direct implications for American technological leadership and job creation. Both companies are headquartered in California's Silicon Valley, and their rivalry is driving billions of dollars in domestic investment in chip design, data center construction, and software development. Google's data centers — located in states including Iowa, Oklahoma, South Carolina, Virginia, and Nevada — employ thousands of workers and generate significant local tax revenue.

For American startups and small technology companies, the availability of cheaper inference computing through Google's new chips and startup fund could lower the barrier to building competitive products. A developer in Austin, Texas, or Raleigh, North Carolina, who previously could not afford Nvidia's premium hardware may now find Google's platform economically viable. Wider access to computing of this kind could broaden the geography of the US tech economy beyond its traditional coastal concentrations.

Why It Matters

Google's hardware push reflects a broader strategic imperative: the company that controls the infrastructure layer of the computing stack captures a disproportionate share of the economic value generated by the applications running on top of it. Amazon Web Services built its dominance in cloud computing by offering infrastructure services that made it indispensable to thousands of companies. Google is attempting to replicate that model in the high-performance computing market by making its chips and cloud platform the default choice for the next generation of compute-intensive applications.

The geopolitical dimension is equally significant. The United States and China are engaged in an intense competition for leadership in advanced semiconductor design and manufacturing. Google's investment in custom silicon reduces American dependence on any single chip supplier and strengthens the domestic technology ecosystem. Taiwan Semiconductor Manufacturing Company, which fabricates chips for both Google and Nvidia, remains a critical chokepoint in the global supply chain — a vulnerability that both companies are working to reduce through investments in US-based manufacturing capacity.

What's Next

Google will make the TPU 8t and TPU 8i available to cloud customers in limited preview starting in May, with general availability expected in the third quarter of 2026. The $750 million startup fund will begin accepting applications in June. Nvidia's next-generation Blackwell Ultra chips are expected to ship in volume later in 2026, setting up a direct performance comparison that enterprise customers making multi-year infrastructure commitments will watch closely. The outcome of that competition will shape the economics of computing for the rest of the decade.

Sources: Ars Technica; Google Blog; TechStartups