Vercel Confirms Security Breach Traced to Compromised Third-Party AI Developer Tool

Vercel, the cloud platform widely used by developers to build and deploy web applications, has confirmed a security breach traced to Context.ai, a third-party AI tool used by one of its employees. The tool had been compromised, exposing Vercel's internal systems to unauthorised access.

Background

Vercel is one of the most popular deployment platforms in the web development community, hosting applications for millions of developers and businesses worldwide. Because its infrastructure underpins a significant portion of the modern web, any security incident involving its systems is a matter of broad concern.

Context.ai is an AI-powered developer tool designed to help engineers understand and navigate large codebases. Like many AI tools integrated into developer workflows, it needs access to code repositories and internal systems to work effectively. If the tool itself is compromised, that same access becomes a significant security exposure.

Key Developments

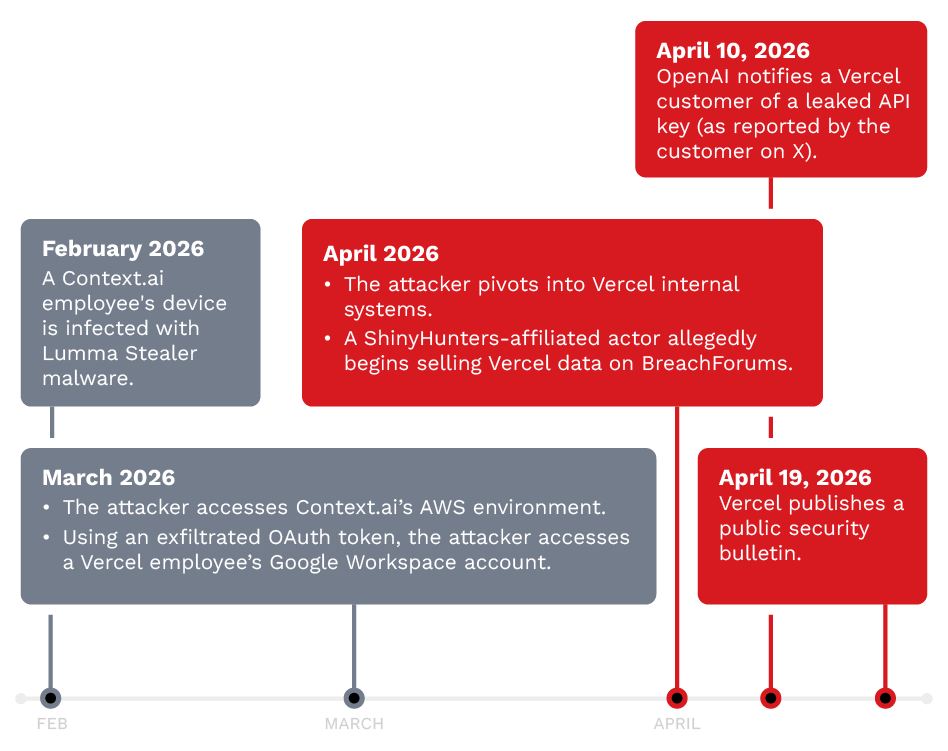

According to Vercel's disclosure, the breach occurred when a malicious actor compromised Context.ai and then used the tool's existing access permissions to reach Vercel's internal systems. The company has not disclosed the full extent of the data accessed, but it confirmed that it is conducting a thorough investigation and has revoked the compromised tool's access.

Security researchers have noted that the incident follows a pattern of supply chain attacks in which legitimate, trusted tools are weaponised to gain access to high-value targets. The use of AI tools in developer environments has expanded rapidly, often outpacing the security review processes that govern traditional software integrations.

Why It Matters

The Vercel breach highlights a growing and underappreciated attack vector: the AI tools that developers increasingly rely on for productivity. Because these tools often require broad access to code, infrastructure credentials, and internal documentation, a single compromised AI integration can provide attackers with a privileged foothold in an organisation's systems.

Security experts are calling for stricter vetting of third-party AI tools, more granular access controls, and regular audits of the permissions granted to AI-powered integrations in enterprise environments.

What's Next

Vercel has indicated it will strengthen its third-party tool review processes and implement additional monitoring for anomalous access patterns. The incident is expected to prompt broader industry discussion about the security governance of AI tools in software development pipelines. Affected customers have been advised to review their own security configurations and audit any third-party integrations.

Sources: TechStartups; Trend Micro Research